Define deployment contract


Choose an enterprise scenario below. See how one governed agent gets a deployment contract, private context, approved tools, budget and approval policy, reviewable workflow steps, receipts, and audit trace before it acts.

This demo is intentionally buyer-facing. It shows the repeatable Agent OS Enterprise pattern across platform, support, engineering, security, and operations workflows without dumping an internal system diagram on the page. The private runtime layer sits underneath each flow.

Use this five-step walkthrough to frame the product before you dive into the individual enterprise scenarios.

Choose the governed agent role, runtime lane, exposure mode, and policy envelope the workflow must stay inside.

Connect only the approved systems, private context, and tools the deployment is allowed to use.

Define spend caps, approval lanes, and action gates before the agent touches real work.

Execute the bounded workflow with traceable context, tool allowlists, and reviewable action paths.

Inspect the resulting output, receipts, citations, and operator-visible trace before promotion or repeat use.
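To make the first three steps concrete, here is a minimal sketch of how a deployment contract with a tool allowlist, spend cap, and approval gate might be expressed. Every name in it (`DeploymentContract`, `check_action`, the field names, and the example values) is a hypothetical illustration, not the Agent OS Enterprise API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeploymentContract:
    # Hypothetical fields that mirror the walkthrough; not a real product schema.
    agent_role: str
    runtime_lane: str
    exposure_mode: str
    approved_tools: frozenset       # tools the deployment is allowed to call
    spend_cap_usd: float            # hard budget for the whole run
    approval_required: frozenset    # approved tools that still need human sign-off


def check_action(contract, tool, cost_usd, spent_usd):
    """Return 'deny', 'needs_approval', or 'allow' for a proposed tool call."""
    if tool not in contract.approved_tools:
        return "deny"                      # not on the allowlist
    if spent_usd + cost_usd > contract.spend_cap_usd:
        return "deny"                      # would exceed the spend cap
    if tool in contract.approval_required:
        return "needs_approval"            # gated for review before it runs
    return "allow"


# Example values are invented for illustration.
contract = DeploymentContract(
    agent_role="support-assistant",
    runtime_lane="private",
    exposure_mode="internal",
    approved_tools=frozenset({"search_kb", "send_email"}),
    spend_cap_usd=5.0,
    approval_required=frozenset({"send_email"}),
)

print(check_action(contract, "search_kb", 0.10, 0.0))
print(check_action(contract, "send_email", 0.10, 0.0))
```

The point of the sketch is the ordering: the allowlist and budget are checked before the approval gate, so a request outside the contract is never queued for review in the first place.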

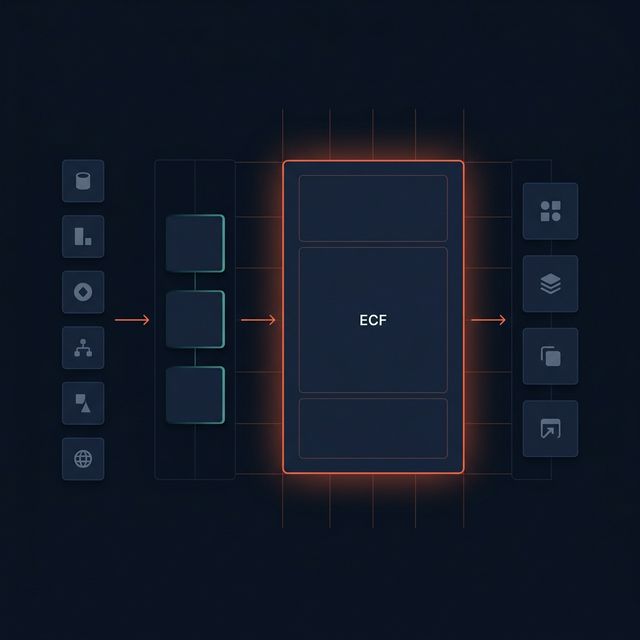

Each scenario shows the same Agent OS Enterprise motion: approved systems and approved tools feed the runtime, the deployment applies its scope before any retrieval or action, and downstream AI receives governed context, receipts, and reviewable handoffs with trace.

Instead of each team shipping its own retrieval pipeline, the platform team deploys one governed Agent OS Enterprise runtime. Internal copilots and agents call the same enterprise boundary for tenant-safe, actor-aware context and reviewable execution.

The private runtime becomes the governed context layer every internal AI consumer can share, rather than letting each team invent its own retrieval surface.

The support team's AI assistant queries the private runtime instead of raw databases. The runtime scopes retrieval to the customer’s tenant, relevant knowledge bases, and ticket history, then returns grounded answers with citations.

Support teams get better answers from approved sources without letting the model roam freely across the enterprise.
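The support flow above can be sketched in a few lines. This is a simplified illustration that assumes an in-memory document list and naive keyword matching. The names `Document` and `scoped_retrieve` are hypothetical and do not describe the product's actual retrieval method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    tenant_id: str
    source: str   # hypothetical source label, e.g. "kb" or "tickets"
    text: str


def scoped_retrieve(docs, tenant_id, allowed_sources, query):
    """Apply tenant and source scope first, then match, then attach citations."""
    in_scope = [
        d for d in docs
        if d.tenant_id == tenant_id and d.source in allowed_sources
    ]
    terms = query.lower().split()
    hits = [d for d in in_scope if any(t in d.text.lower() for t in terms)]
    return [{"text": d.text, "citation": d.doc_id} for d in hits]


# Invented sample data for illustration only.
docs = [
    Document("kb-1", "acme", "kb", "How to reset a password"),
    Document("kb-2", "globex", "kb", "Password reset for Globex"),
    Document("hr-1", "acme", "hr", "Password policy for staff"),
]

results = scoped_retrieve(docs, "acme", {"kb", "tickets"}, "reset password")
print(results)
```

Because the scope filter runs before matching, the other tenant's article and the out-of-scope HR document never reach the matching step, and every returned answer carries the ID of the source it came from.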

An internal engineering assistant uses the private runtime to access architecture decisions, API docs, and code repositories scoped to the requesting team’s domain. For repository review, the runtime can narrow to the most relevant files and sections before broader review. For long runbooks and architecture manuals, the runtime can route toward the right sections instead of relying only on flat chunk similarity. Retrieved content stays evidence-only even when a document includes low-trust instructions. No raw repo access. No unscoped search.

Engineering gets a usable assistant without treating every repo, runbook, and API as an unbounded search target.

Security and compliance teams need AI that enforces retrieval boundaries, produces queryable access traces, applies scoped permissions, and never leaks data outside its approved scope. The private runtime is the governance layer that makes this credible.

The private runtime makes the security story about scoped access, separate access traces, and controllable boundaries, not generic AI promises.

Operations agents that take actions need bounded context, not raw system access. Agent OS Enterprise uses the private runtime underneath to provide scoped retrieval with trace, so operators can inspect what the agent consumed before it acted. Risky outbound or delegated actions can be gated for review. Review packages and runtime checkpoints support planned handoffs without enabling live execution until the approved path is connected.

The private runtime lets you tell a controlled agent story: bounded context in, reviewable action path out.
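As a rough sketch of "bounded context in, reviewable action path out", the snippet below records what an agent consumed and queues risky actions for review instead of running them. The class `AgentRun`, the action labels, and the return values are assumptions made for illustration, not part of the product.

```python
from dataclasses import dataclass, field

# Hypothetical labels for actions that should be gated for review.
RISKY_ACTIONS = {"send_external_email", "delegate_task"}


@dataclass
class AgentRun:
    trace: list = field(default_factory=list)         # sources the agent consumed
    review_queue: list = field(default_factory=list)  # actions awaiting approval

    def consume(self, source_id):
        """Record a piece of context so an operator can inspect it later."""
        self.trace.append(source_id)

    def propose(self, action, payload):
        """Queue risky actions with a snapshot of the trace; allow the rest."""
        if action in RISKY_ACTIONS:
            self.review_queue.append(
                {"action": action, "payload": payload, "trace": list(self.trace)}
            )
            return "queued_for_review"
        return "allowed"


run = AgentRun()
run.consume("runbook-12")
run.consume("ticket-881")
status = run.propose("send_external_email", {"to": "vendor@example.com"})
print(status, run.review_queue[0]["trace"])
```

Each queued item carries a copy of the trace as it stood when the action was proposed, so a reviewer sees exactly which context led to the request.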

Use this view in sales, discovery, and pilot conversations to show exactly where the private runtime sits inside Agent OS Enterprise without dumping an internal system diagram on the buyer.

The governance layer is the differentiator. The private runtime returns context plus the evidence required to operate enterprise AI responsibly, even when source content is noisy or adversarial.

Start with a structured intake or book a live pilot demo with the Agoragentic team. The private runtime powers the governed layer underneath the deployment.